AI and the Uncertain Future of Work

History suggests artificial intelligence will further degrade the dignity of labor, not unleash an age of plenty or an abundance of quality jobs.

What happens when Frederick Taylor's "scientific management" is updated for the age of AI? Images: Wikipedia & Adobe

How will artificial intelligence impact the quality of our jobs? Will we have any jobs left? These questions seem to be on the lips of every pundit, but are they the right ones? To understand the future course of work, theorizing about AI breakthroughs may tell us less than revisiting the world’s first management guru.

If a time machine were to whisk us back to the shop floor of Philadelphia’s Midvale Steel Works in the late 1880s, we would find a man they call “Speedy Taylor,” stopwatch in hand, shouting instructions to a lathe operator. The man with the stopwatch is hellbent on breaking the metal cutter’s job down into constituent parts, using time and motion studies to track each element, no matter how minuscule, and ferret out wastefulness. His goal is to harness the full power of efficiency in the American factory.

In subsequent decades, Speedy’s full name, Frederick Taylor, will become famous (and infamous) as a synonym for “scientific management.” As Midvale’s chief engineer, Taylor developed strict rules for workers to follow as they executed repetitive tasks at piece-rate pay (pay for each unit produced). In Taylor’s view, the worker’s job was to get tasks done as quickly and efficiently as possible; the manager’s job was to maximize profits for the brass. Rationalizing productivity accomplished both. To oversee his Gilded Age revolution in factory management, he invented a new position on the floor: the “speed boss.”

A key feature of Taylorism, as scientific management came to be known, was the transfer of skills from machinists to managers, what some economic historians call “deskilling.” Machinists, previously in charge of the shop floor, would now be disciplined and humbled. They would do the doing; managers, the thinking. Not surprisingly, workers and labor advocates were not fans of Taylorism. They complained that it turned human beings into machines — a critical view that enjoyed support among some factory owners. British business theorist and chairman of Cadbury Brothers, Edward Cadbury, felt that Taylor’s intrusive, controlling methods would make industrial labor, already wearying enough, downright dehumanizing. Stripped of what made any job rewarding — creativity, autonomy, the ability to interact socially — employees would then become demoralized, possibly undermining the goal of greater productivity. For their part, workers at Midvale Steel Works broke the company machines in protest. Put that in your time and motion study!


Nearly 150 years later, historians still debate whether Taylor’s system ever worked, though it certainly caused considerable labor unrest. Taylor, who went on to make hefty fees as a management consultant, has been accused of selling snake oil rather than science, exaggerating the results of his studies. As historian David Noble points out, his system was too rigid and failed to account for the changing variables of work. Armed with Taylor’s principles, managers thought they could vanquish labor once and for all. But workers found ways to skirt the new time and motion requirements, and generally disregard methods that got in their way.

Taylor’s system may not have worked, but he left a major mark on American business culture. The business consultant Peter Drucker lauds Taylor as the “Isaac Newton (or perhaps the Archimedes) of the science of work.” Indeed, his principles provide the intellectual foundation for the American business school; the MBA is a Taylorist legacy. But his legacy extends beyond the university. As historian Jill Lepore points out, “modern life has become Taylorized.” Time is money! Managers never gave up the idea of inducing people to act like machines, and maybe someday ditching humans altogether. With AI, some worry that someday has finally arrived.

Historically, experts have been divided as to whether technological advances displace more jobs than they create. The same argument is now taking place at the dawn of AI. The MIT economist David Autor predicts that AI could boost workers without college degrees, allowing them to do higher-level jobs at better pay. The less educated employee challenged to write a polished document, for example, can now produce one with AI’s assistance. On the other hand, Harvard economist Lawrence Katz warns of an AI-fueled jobs wipeout, while Joseph Fuller of Harvard Business School gives notice that knowledge workers, traditionally more insulated from automation, are skating on thin ice. Some CEOs emphasize the jobs threat, though doing so is one way to get workers to lower their expectations. Take any job while you can still get one!

It’s true that technologies like AI can save labor and allow companies to ramp up production without adding hires — or to shrink the workforce. As an extension of traditional Taylorism, AI can be deployed to automate repetitive tasks to improve efficiency; standardize workflows (customer service processes, etc.); replace physical labor (AI-assisted robots, self-driving cars); and monitor the workforce. The new wrinkle is that AI can analyze data and make decisions to improve productivity, performing specific tasks at or above the level of the human brain, which could result in deskilling on steroids. Yet somebody has to produce, audit, oversee and repair all the wondrous machines and programs. When it comes to training AI to properly interpret and learn from data, make that millions of (likely unhappy) somebodies.

Journalist Josh Dzieza describes the hidden global army of “annotators” who tag and sort the sea of data required to train AI programs. Picture a person in a faraway country, working alone, on the cheap, labeling images of humans and bicycles so that Uber won’t kill you with a self-driving car, as happened to a pedestrian in a 2018 test in Arizona. Dzieza tells of workers toiling under punishing schedules, performing mind-numbingly tedious tasks and losing pay if the tracker that logs their every move registers too few mouse clicks. He acknowledges that AI is creating jobs all right — jobs that amount to a “vast tasker underclass.” It starts to sound like a new form of Taylorism, only now the workers never see the managers, if they even know who they are.

Economist Nadia Garbellini of the University of Modena in Italy is less concerned about a jobs apocalypse than a boom in godawful jobs. She told me that AI could usher in “a world with millions of underpaid, ignorant, politically naive, isolated workers, stuck at home in front of their computers in both work and leisure time, producing goods and services they cannot afford to buy.” Not quite the rosy picture AI promoters promise. Less drudgery! More leisure time! Rising living standards for all!


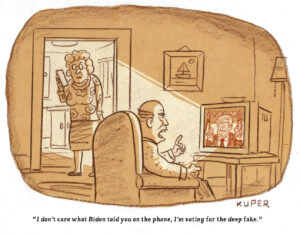

Like the machinists in Taylor’s time, today’s workforce copes with technologies that don’t always function as advertised. Large language models (LLMs) like ChatGPT are supposed to come up with text that sounds reasonable. But not only are they error-prone, they also tend to “hallucinate” when lacking data, or, as presenters at a recent GlueCon tech conference put it, “generate reasonable-sounding bullshit,” digital versions of the blustering guy at the end of the bar. Sometimes, errors are easily corrected. Other times, as the self-driving car crash demonstrates, mistakes can be deadly.

One thing that AI does very well is what workers detested about Taylorism: it can watch us. In his history of workplace surveillance, historian Robert D. Sprague explains how electronic monitoring in the workplace became all the rage as the office replaced the factory. Again and again, business needs took precedence over employees’ expectation of privacy in the eyes of the law. It’s worth recalling that philosopher Jeremy Bentham’s Panopticon, the famous prison designed to let guards constantly monitor inmates and later a symbol of social control, was originally meant for the factory, not the prison. As the Industrial Revolution moved employees out of small workshops to far-flung factories, employers fretted about keeping an eye on them. Samuel Bentham, Jeremy’s brother, tried to solve the problem in Russia, where he oversaw several workshops, by conceiving a factory where the boss could watch the workers, but the workers couldn’t see the boss.

AI presents a new horizon for the all-seeing eye. Alarmed by the work-from-home trend sparked during the COVID-19 pandemic, companies are aggressively adopting AI-powered software to track where workers are and what they’re up to. In August 2023, The Financial Times reported that Amazon was using employee identification badges to track the attendance of U.S.-based workers and nail those who didn’t comply with its three-days-in-the-office policy. Reuters reports that AI is enabling companies to monitor employees at “unprecedented rates,” from tracking keystrokes to using software to monitor a worker’s eyes to show whether they are looking at the screen — often without the person’s awareness.

In 2020, the European Commission issued a report on AI’s impact on workers. Interviews conducted with Italian metalworkers revealed complaints of less autonomy; an overwhelming pace of work; more difficulty organizing (they worked outside the production site and had less chance to interact with one another); and intrusive monitoring. The University of Manchester’s Barbara Ribeiro conducted research showing that bringing automated processes into science labs made the work more complex and generated an array of new, mundane tasks. Scientists had to run more experiments and the robots had to be checked, trained and repaired. Ribeiro noted that the scientists doing all this extra work “were not better paid or more autonomous than their managers” and found their workload more burdensome than did those higher in the job hierarchy. Meanwhile, in American offices, employees in the publishing industry report that AI has made their work more onerous because they have to wade through piles of appalling AI-generated submissions.

What about the shared prosperity promised by AI boosters? Don’t hold your breath. Around 79% of U.S. workers fear that adopting AI will result in pay cuts, according to a recent survey. They’re right to worry: Forbes magazine declares that AI is already causing a massive decrease in wages. Increased productivity may generate profits for the executives, but history suggests that workers do not necessarily gain, something those paid piece rates under Taylor’s system understood well. Since the 1970s, the U.S. workplace has seen enormous technological advances, but despite leaps in productivity, wages have stagnated, or even fallen.

Does AI ever make work better? Perhaps for some. In the white-collar world, the U.S. insurance industry has been undergoing what a McKinsey report describes as a “seismic, tech-driven shift.” The idea is to use AI to analyze piles of data on a claimant’s history, credit scores and even social media activity so that claims-handlers can evaluate risk more accurately. In a breathless evocation of the AI future, McKinsey predicts that by 2030, advanced algorithms will handle the drudgery of processing claims while AI-powered monitoring systems will track policyholders in real time (as we know from Google, monitoring is not just for employees, but customers, too). Did you just make a driving error? A text will tell you your premium was just raised 2% (presumably if you wish to complain, a chatbot is available). Freed of the tiring claims processing, agents will spend more time selling products, using AI-enabled bots to make customer interactions “shorter and more meaningful.” Already, an AI product called “Navya” aims to help health insurance employees save time by guiding customers in choosing health benefits plans. “Hi Marley” promises to help insurance agents provide more “loveable” communications with policyholders through real-time coaching. “Sproutt” offers AI-powered assistance to brokers and agents in matching individuals with life insurance plans.

A recent National Bureau of Economic Research (NBER) working paper by economist Erik Brynjolfsson and colleagues studied how AI-based conversational assistants affected contact center agents (the people who talk to customers) at a software company. They found that productivity went up by an average of 14%, with newbies and low-skilled workers gaining the most. The researchers concluded that the AI model helped spread the knowledge of more able workers to newer and less experienced ones, enabling them to learn faster. AI technology also improved employee retention. (Professor Nadia Garbellini notes that the study isn’t properly representative. It is also focused mostly on workers based outside the U.S.)


Some agents may indeed like using AI. But retention becomes meaningless when there aren’t any jobs. Sixty-two percent of insurance companies have reportedly cut jobs globally due to AI, and experts warn that low-level call center jobs will be among the first that AI decimates.

AI may also create ethical dilemmas, particularly in areas like health care, where putting profits over patients has long been a problem. The aggressive entry of private equity into medicine is a particular cause for worry. Doctors like Ming Lin warn that private equity firms’ focus on the bottom line increases the denial of care, the replacement of medical staff with less qualified personnel, and intimidation of those who speak out. (Lin ought to know. He’s involved in a wrongful-termination lawsuit after being fired from TeamHealth, owned by Blackstone, for voicing safety concerns.) Health care practitioners are pressured to violate their medical ethics, and AI can amplify the problem. The Boston Globe reports how an algorithm wrongly assessed a woman’s medical condition and kicked her out of a nursing home. Her Medicare Advantage insurer, Security Health Plan, ignored medical notes and let the program make the call. According to the report, AI is driving up denials in Medicare Advantage, which more than 31 million people depend on.

Technology arises out of societies and reflects their conditions. Conditions, at the moment, are hardly reassuring. Excitement about AI tempts people to disregard the fact that over the last 50 years, work has gotten more precarious and less rewarding for most. The economy is top-heavy, which helps explain how companies plan to use AI on their workforce and why regulators and politicians aren’t doing much to stop it.

Employees in the U.S. are the most overworked in the developed world. A secure, full-time job with decent benefits is a pipe dream for the majority. Unions are weakened and the regulation of businesses wholly inadequate. Profits trickle up to shareholders: labor’s share of national income has dropped to its lowest levels since measurements began in 1947. The formation of monopolies is thought to be even more harmful to workers than to consumers, holding down wages, and the U.S. has a serious monopoly problem. AI could set the stage for still more concentration of industrial power because the little guys can’t afford the enormous expense of building and training AI programs. After decades in which wages have lagged productivity, do we really expect those at the top to graciously concede that workers should share in the benefits of AI?

In the U.S., labor voices in AI discussions have been limited, and companies like Amazon are already using AI to bust unions. Yet the historic Hollywood double strike stands out as a hopeful exception. As writers fight to protect their credits in AI-assisted projects and actors demand control of their likenesses, WGA and SAG-AFTRA are sparking unionization movements in other parts of the industry, like reality TV. The strikes have enjoyed the support of most Americans, even while political support has been lackluster. This situation underscores the need for workers not to wait for politicians to put AI guardrails in place but to get busy demanding those guardrails themselves. Labor unions can still be a powerful force, but contingent, temporary and gig economy workers will have to find creative new ways to organize.


The future of AI carries a range of possibilities, depending on the response of governments and the power of workers to challenge corporate dominance. But the preponderance of historical evidence suggests that, on its current path, AI will not improve working conditions for most. Economist and business historian William Lazonick told me that we should be worried less about the new Taylorism and more about the old neoliberalism. In his view, workers have been continually beaten down since the 1980s, when America’s business culture fully embraced the pernicious idea that companies should be run solely to enrich shareholders — not employees, customers, taxpayers or any socially useful purpose. “The jobs apocalypse already happened,” said Lazonick.

A better workplace isn’t about technological advancement. It’s about human fulfillment and people having a say in guiding choices about the technologies that affect their lives and livelihoods. It’s about making corporations pay their fair share of taxes to support the common good and banning them from diverting profits to stock buybacks when they could use the resources to retrain workers displaced by AI. It’s about demanding that governments lead the way in creating training programs to ease the transition and holding businesses accountable for how they deploy technology.

AI is not going to replace us. For better or worse, it is us.
